Grafana Article Series (II): Tracing with Grafana Agent and Grafana Tempo

This article was last updated on: July 24, 2024

👉️URL: https://grafana.com/blog/2020/11/17/tracing-with-the-grafana-cloud-agent-and-grafana-tempo/

✍Author: Robert Fratto • 17 Nov 2020

📝Description:

Here’s your starter guide to configuring the Grafana Agent to collect traces and ship them to Tempo, our new distributed tracing system.

Editor’s note: Code snippets were updated on 2021-06-23.

Back in March, we introduced the Grafana Agent, a subset of Prometheus built for hosted metrics. It uses much of the same battle-tested code as Prometheus and can save around 40% on memory usage.

We’ve been adding features to the Agent since launch. It now includes clustering, additional Prometheus exporters, and support for Loki.

Our latest addition? Support for Grafana Tempo! Tempo is our easy-to-operate, high-scale, and cost-effective distributed tracing system.

In this article, we’ll explore how to configure the Agent to collect traces and send them to Tempo.

Configure Tempo support

Adding tracing support to your existing Agent configuration file is simple: all you need to do is add a `tempo` block. Those familiar with the OpenTelemetry Collector may recognize some of its settings.
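
As a minimal sketch, a `tempo` block could look like the one below. It assumes the configuration layout the Agent used around mid-2021 (named instances listed under `tempo.configs`; later Agent releases renamed the top-level key to `traces`), and `tempo.example.com:443` is a placeholder endpoint, not a real service.

```yaml
# Minimal Agent config with tracing enabled (mid-2021 layout, assumed).
tempo:
  configs:
    - name: default
      # Accept Jaeger spans over Thrift compact (UDP port 6831 by default).
      receivers:
        jaeger:
          protocols:
            thrift_compact:
      # Tag every incoming span with env=prod.
      attributes:
        actions:
          - action: upsert
            key: env
            value: prod
      # Where collected spans are sent (placeholder endpoint).
      remote_write:
        - endpoint: tempo.example.com:443
```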

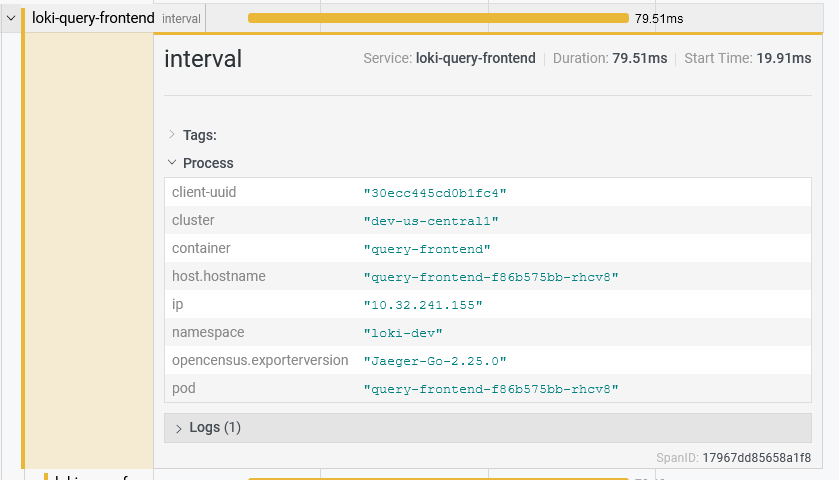

The `receivers` block allows the Grafana Agent to accept tracing data from a wide range of systems. We currently support receiving spans from Jaeger, Kafka, OpenCensus, OTLP, and Zipkin.

While the OpenTelemetry Collector allows you to configure metrics and log receivers, we currently only expose the tracing-related ones. We believe the Agent’s existing Prometheus and Loki support covers the other pillars of observability.

If you wanted to, you could configure the Agent to accept data from every receiver.
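
A sketch of a `receivers` fragment that enables most of them at once, each on the OpenTelemetry receiver's default listen address. It is assumed to sit inside a `tempo` config instance; Kafka is left out because that receiver consumes from a Kafka broker rather than listening on a port, so it needs broker and topic settings of its own.

```yaml
# receivers fragment; empty entries use each receiver's default address.
receivers:
  jaeger:
    protocols:
      grpc:            # default port 14250
      thrift_binary:   # default port 6832
      thrift_compact:  # default port 6831
      thrift_http:     # default port 14268
  opencensus:          # default port 55678
  otlp:
    protocols:
      grpc:
      http:
  zipkin:              # default port 9411
```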

The `attributes` block, on the other hand, lets operators manipulate tags (attributes) on incoming spans sent to the Grafana Agent. This is really useful when you want to add a fixed set of metadata, such as noting an environment.
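
A sketch of an `attributes` fragment, again assumed to sit inside a `tempo` config instance. The first action is the environment tag described here; the second, with a hypothetical `cluster` key, shows the OpenTelemetry attributes processor's `insert` action for contrast.

```yaml
# attributes fragment; it sits inside a tempo config instance.
attributes:
  actions:
    # upsert: add the key if it is missing, overwrite it if it exists.
    - action: upsert
      key: env
      value: prod
    # insert: add the key only if the span does not already have it
    # ("cluster" and "us-east-1" are hypothetical).
    - action: insert
      key: cluster
      value: us-east-1
```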

For example, an `upsert` action can set an `env` tag with the value `prod` on every received span. The `upsert` action means that any span that already has an `env` tag will have it overwritten. This is useful for guaranteeing that you know which Agent received a span and which environment that Agent is running in.

Attributes are really powerful and support use cases beyond the example here. Check out the OpenTelemetry documentation on them to learn more.

But at Grafana Labs, we didn’t stop at a subset of the OpenTelemetry Collector: we also added support for Prometheus-style `scrape_configs`, which can automatically tag incoming spans based on the metadata of discovered targets.

Discover additional metadata with Prometheus service discovery

Promtail is the client that collects logs and sends them to Loki. One of its most powerful features is its support for Prometheus service discovery mechanisms. These mechanisms let you attach the same metadata to your logs as to your metrics.

When your metrics and logs share the same metadata, you lower the cognitive overhead of switching between systems, and it “feels” as if all your data lives in one system. We wanted this capability to extend to traces as well.

Joe Elliott added the same Prometheus service discovery mechanisms to the Agent’s tracing subsystem. It works by matching the IP address of the system sending a span against the addresses of the targets found by service discovery.

For Kubernetes users, this means you can dynamically attach the namespace, pod, and container name of the container that sent each span.
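
A sketch of what this can look like, assuming the Agent runs inside the cluster under a service account that is allowed to list pods (Kubernetes service discovery then picks up in-cluster credentials on its own). The job name is arbitrary; the `__meta_kubernetes_*` labels are standard Prometheus Kubernetes SD metadata.

```yaml
# scrape_configs fragment; it sits inside a tempo config instance.
scrape_configs:
  - job_name: kubernetes-pods
    kubernetes_sd_configs:
      - role: pod
    # Copy discovery metadata into labels that are attached to spans
    # whose sender IP matches a discovered pod.
    relabel_configs:
      - source_labels: [__meta_kubernetes_namespace]
        target_label: namespace
      - source_labels: [__meta_kubernetes_pod_name]
        target_label: pod
      - source_labels: [__meta_kubernetes_pod_container_name]
        target_label: container
```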

This feature isn’t only useful for Kubernetes users, though. All of Prometheus’s various service discovery mechanisms are supported here. This means you can use the same `scrape_configs` across your metrics, logs, and traces to get the same set of labels, making it easy to move between your observability data as you switch among metrics, logs, and traces.
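
For hosts outside Kubernetes, a plain `static_configs` job is one option. This is an illustrative sketch: the job name, address, and labels are hypothetical, and it assumes the Agent compares only the host part of each target address against the IP address of the span’s sender.

```yaml
# scrape_configs fragment for a host outside Kubernetes (values hypothetical).
scrape_configs:
  - job_name: vm-services
    static_configs:
      # Spans sent from 10.0.0.12 would receive these two labels.
      - targets: ['10.0.0.12:8080']
        labels:
          service: checkout
          datacenter: dc1
```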

Configure how spans are pushed

Of course, just collecting spans isn’t very useful! The final part of configuring Tempo support is the `remote_write` section. `remote_write` is a Prometheus-like configuration block that controls where collected spans are sent.

For the curious, this is a wrapper around the OpenTelemetry Collector’s OTLP exporter. Because the Agent exports spans in OTLP format, you can send spans to any system that accepts OTLP data. Our focus today is Tempo, but you could even have the Agent send spans to another OpenTelemetry Collector.
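
As a hedged illustration, pointing `remote_write` at another OpenTelemetry Collector might look like this. The hostname is hypothetical, 4317 is the standard OTLP/gRPC port, and `insecure: true` (plaintext gRPC) is only sensible on a trusted network.

```yaml
# remote_write fragment targeting another OpenTelemetry Collector's
# OTLP/gRPC receiver instead of Tempo (hostname hypothetical).
remote_write:
  - endpoint: otel-collector.example.internal:4317
    # Disable TLS; only for trusted, internal networks.
    insecure: true
```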

Beyond the endpoint and authentication, `remote_write` lets you control how spans are queued and retried. Batching is managed outside of `remote_write`; it allows for better compression of spans and fewer outbound connections used to transmit data to Tempo. As before, OpenTelemetry has pretty good documentation on this.
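
A sketch of endpoint and authentication settings alongside batching. The endpoint and credentials are placeholders; note that `batch` is a sibling of `remote_write` within the same config instance, not a child of it.

```yaml
# Fragment of a tempo config instance (endpoint and credentials are placeholders).
remote_write:
  - endpoint: tempo.example.com:443
    basic_auth:
      username: "12345"          # e.g. a hosted instance ID
      password: "YOUR_API_KEY"
batch:
  timeout: 5s            # flush a batch after 5s even if it is not full
  send_batch_size: 100   # flush as soon as 100 spans are buffered
```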

Within `remote_write`, the queue and retry settings let you configure how many batches are kept in memory and how long a batch will be retried if it fails. These are the same as the `retry_on_failure` and `sending_queue` settings of OpenTelemetry’s OTLP exporter.
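
A sketch of those settings, assuming they are passed straight through to the OTLP exporter. The values are illustrative starting points, not recommendations, and the endpoint is a placeholder.

```yaml
# remote_write fragment with explicit retry and queue limits.
remote_write:
  - endpoint: tempo.example.com:443
    retry_on_failure:
      enabled: true
      initial_interval: 5s    # wait before the first retry
      max_interval: 30s       # cap on the backoff between retries
      max_elapsed_time: 60s   # stop retrying (drop the batch) after this long
    sending_queue:
      enabled: true
      num_consumers: 10       # batches sent concurrently
      queue_size: 1000        # batches held in memory; new batches are dropped when full
```

Keeping `max_elapsed_time` and `queue_size` modest trades some completeness for bounded memory use and network traffic during a backend outage.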

While it’s tempting to set a high maximum retry time, it can quickly become dangerous. Retries increase the total network traffic from the Agent to Tempo, so it can be better to drop spans than to keep retrying them. Another risk is memory usage: if your backend goes down, a high retry time will quickly fill up the span queue and may bring down the Agent with an out-of-memory error.

Because 100% span retention isn’t practical for systems with a lot of span throughput, tuning batching, queueing, and retry logic to your specific network usage is critical for effective tracing.
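
To see why, a rough upper bound on the memory held by the sending queue is its capacity in batches times the spans per batch times the average encoded span size. The numbers below are purely illustrative assumptions, not measurements, and a batch can slightly exceed `send_batch_size`, so treat the result as an order of magnitude:

```latex
M_{\text{queue}} \approx \texttt{queue\_size} \times \texttt{send\_batch\_size} \times \bar{s}_{\text{span}}
= 5000 \times 100 \times 1\,\text{KB} = 500{,}000\,\text{KB} \approx 500\,\text{MB}
```

During an outage, a long `max_elapsed_time` keeps batches in the queue instead of dropping them, so memory use can climb toward this bound.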

See you next time

We’ve covered how to configure the Grafana Agent for tracing support by hand, but for a practical example, check out the production-ready tracing Kubernetes manifest. The configuration that comes with this manifest touches on everything covered here, including the service discovery mechanism that automatically attaches Kubernetes metadata to incoming spans.

I’m very grateful to Joe for taking time out of his busy Tempo work to add tracing support to the Agent. I’m excited that the Grafana Agent now supports most of the Grafana stack, and even more excited about what’s next.

Note from the original article:

The easiest way to get started with Tempo is on Grafana Cloud. We have both free (which includes 50GB of traces) and paid Grafana Cloud plans to suit a variety of use cases. Sign up for free now.

Glossary

| English | Chinese | Notes |
|---|---|---|
| Receivers | Receiver | Grafana Agent component |
| Trace | Tracking | |
| span | Span | Tracing proper noun |